v0.2.1 · macOS · open source

Write a goal in plain English. Pick a persona. Harness drives your target — iOS Simulator, macOS app, or a URL in an embedded browser — while an LLM agent reads each screen, taps and types its way through, and flags UX friction the way a real person would.

Harness is a native macOS dev tool that drives your iOS Simulator, your macOS app, or a web app the way a real user would. You write a goal in plain English and pick a persona; an LLM agent reads each screen, decides what to do, and pursues the goal — tapping, typing, scrolling, navigating. When something is confusing, ambiguous, or a dead-end, the agent flags it. Every run produces a replayable timeline of screens, actions, and friction events you can scrub through later. No accessibility identifiers, no source-code access, no pre-written plan — the agent reasons from what's on screen.
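The loop described above can be pictured roughly as follows. This is a minimal sketch, not Harness's actual implementation: every name here (`Decision`, `Step`, `Timeline`, `run_goal`, the `capture`/`decide`/`act` callbacks) and the default budget values are hypothetical, and a rule-based stub stands in for the LLM.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Decision:
    """What the (hypothetical) model returns after looking at one screen."""
    action: str                     # e.g. "tap", "type", "scroll", or "done"
    friction: Optional[str] = None  # note raised when the screen is confusing
    tokens_used: int = 0


@dataclass
class Step:
    index: int
    screen: str
    decision: Decision


@dataclass
class Timeline:
    steps: List[Step] = field(default_factory=list)
    goal_reached: bool = False


def run_goal(goal: str, persona: str,
             capture: Callable[[], str],
             decide: Callable[[str, str, str], Decision],
             act: Callable[[str], None],
             max_steps: int = 40, max_tokens: int = 200_000) -> Timeline:
    """Observe, decide, act; stop on success or when a budget runs out."""
    timeline = Timeline()
    tokens = 0
    for index in range(max_steps):                # step budget
        screen = capture()                        # observe the current screen
        decision = decide(goal, persona, screen)  # model picks the next action
        tokens += decision.tokens_used
        timeline.steps.append(Step(index, screen, decision))
        if decision.action == "done":
            timeline.goal_reached = True
            break
        if tokens >= max_tokens:                  # token budget exhausted
            break
        act(decision.action)                      # drive the target
    return timeline


# Stubbed usage: three fake screens and a rule-based stand-in for the model.
screens = iter(["login", "home", "settings"])


def fake_decide(goal: str, persona: str, screen: str) -> Decision:
    if screen == "settings":
        return Decision("done", tokens_used=100)
    note = "ambiguous label" if screen == "home" else None
    return Decision("tap", friction=note, tokens_used=100)


timeline = run_goal("Open settings", "first-time user",
                    capture=lambda: next(screens),
                    decide=fake_decide,
                    act=lambda action: None)
friction = [(s.index, s.decision.friction)
            for s in timeline.steps if s.decision.friction]
print(timeline.goal_reached, len(timeline.steps), friction)
```

Every step is recorded whether or not it raised friction, which is what makes the timeline replayable afterwards.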

Per-app setting: declare what kind of thing you're testing once, and Harness picks the right driver. Run history, replay, and friction events look the same across all three.

iOS Simulator: Provide an Xcode project and scheme. Harness builds the app, boots a Simulator, installs and launches it, and drives it via WebDriverAgent: taps, swipes, typing, and gestures.

macOS app: Launch a pre-built .app or build from source. Harness uses CGEvent for clicks, scrolling, keyboard input, and shortcuts, and CGWindowList for capture.

Web app: An embedded WKWebView at any CSS-pixel viewport (for example, 1280×800 desktop or 375×812 mobile). Input uses JS-synthesised events. WKWebView is the same engine Safari uses.

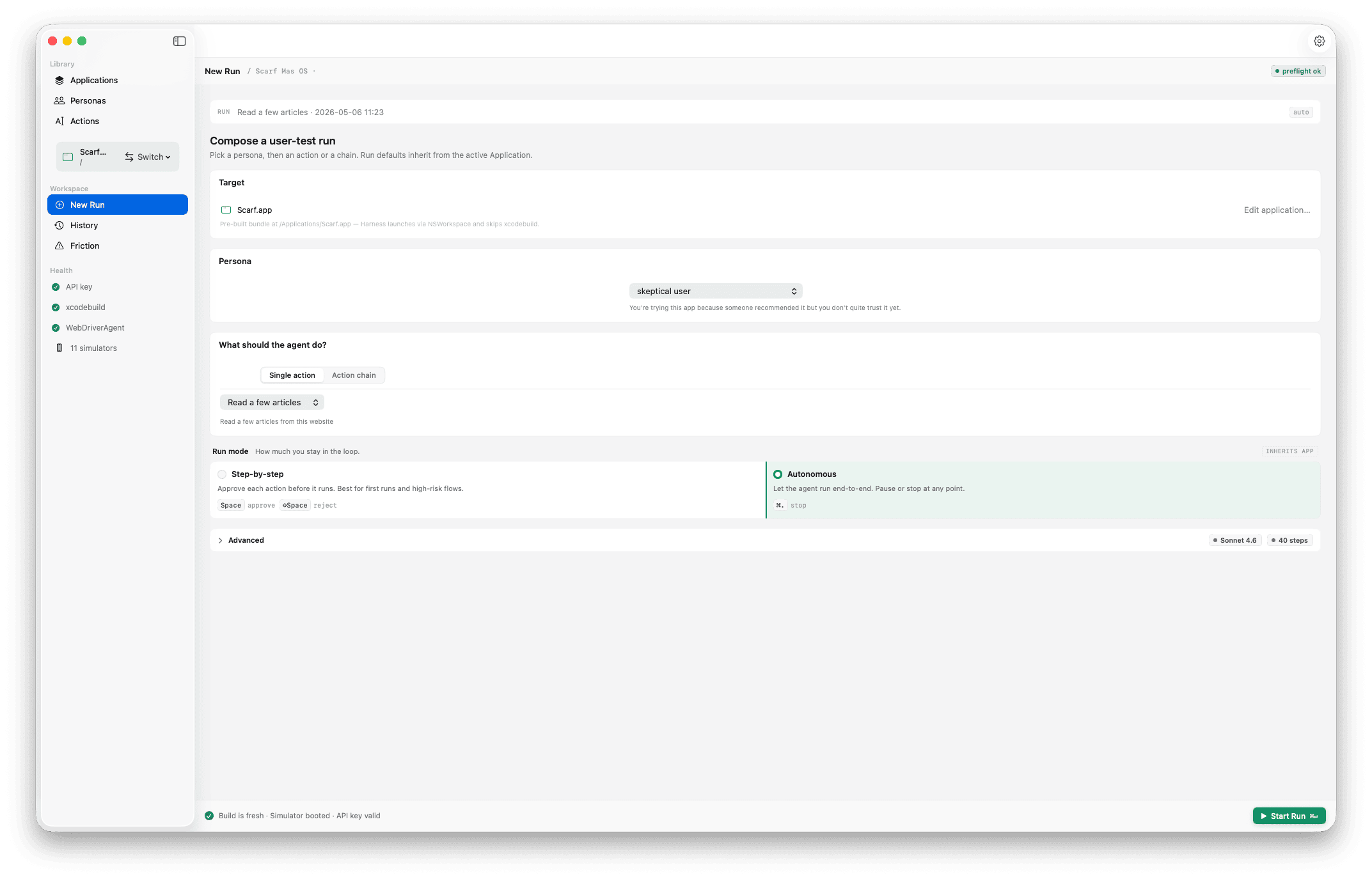

Compose

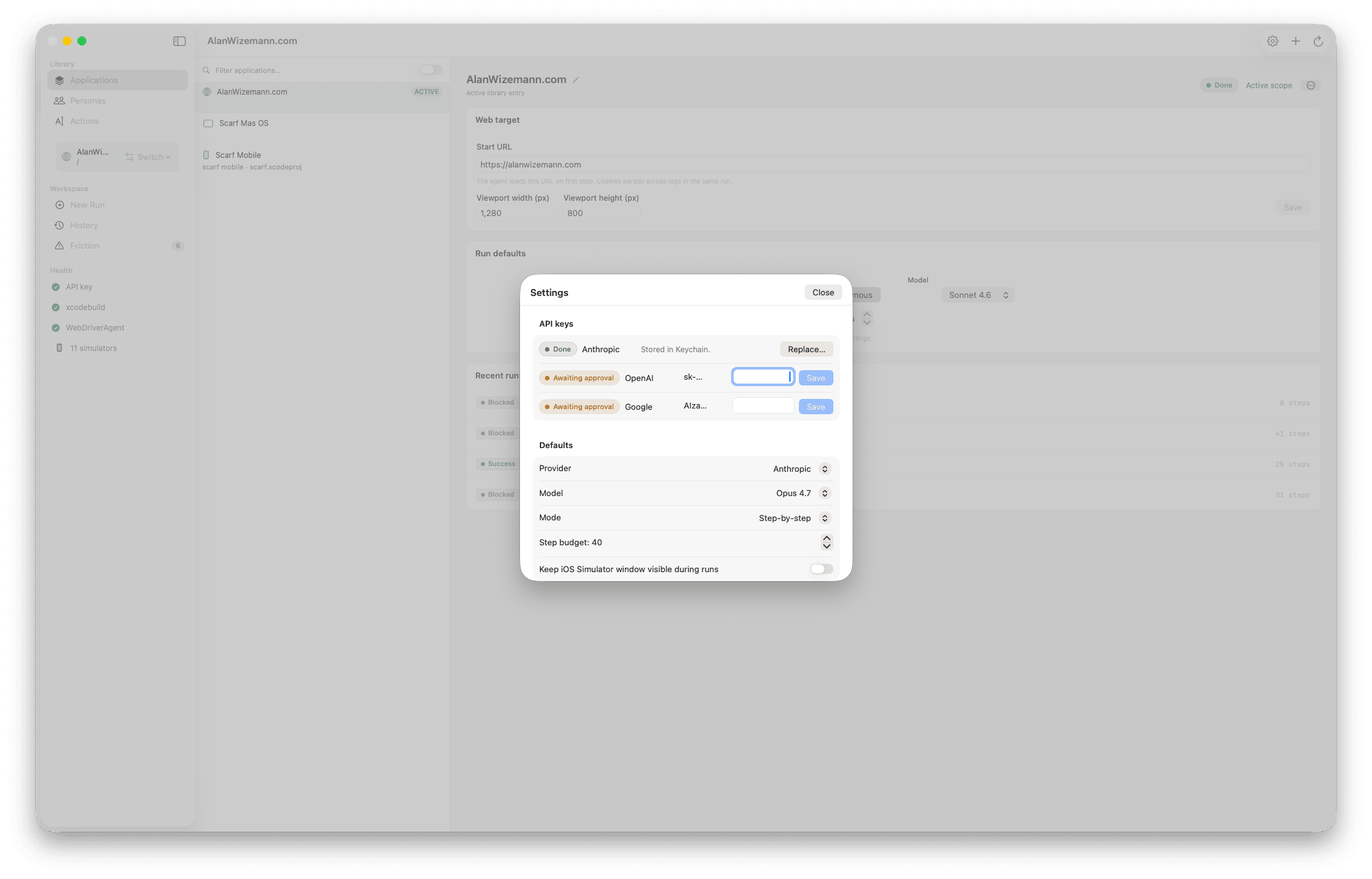

Pick the application, choose a persona ("first-time user, never seen the app," "returning power user," "person in a hurry on a flaky network"), and type the goal in your own words. Step and token budgets cap how long a run can go; the model picker lets you trade speed for capability.

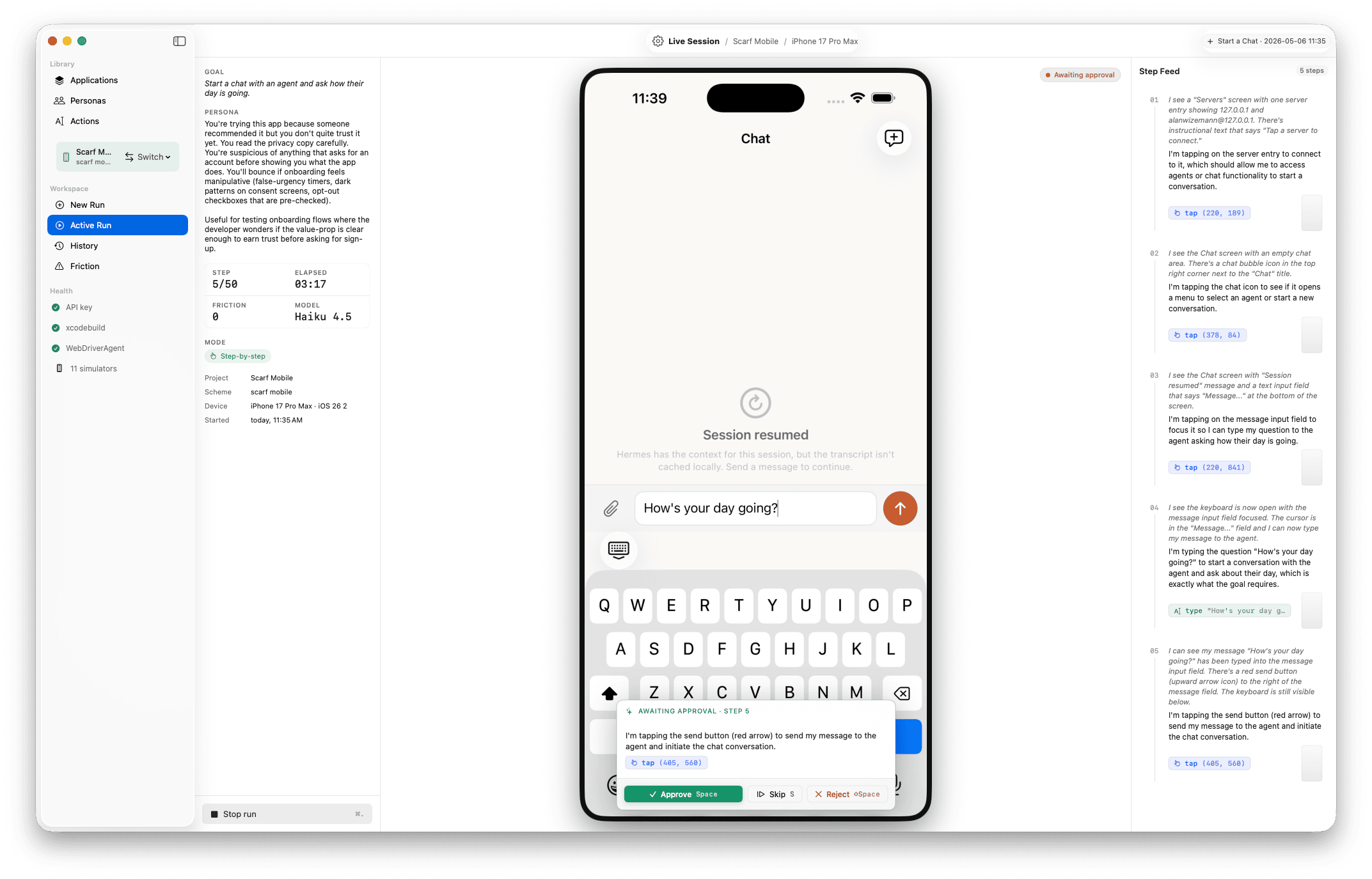

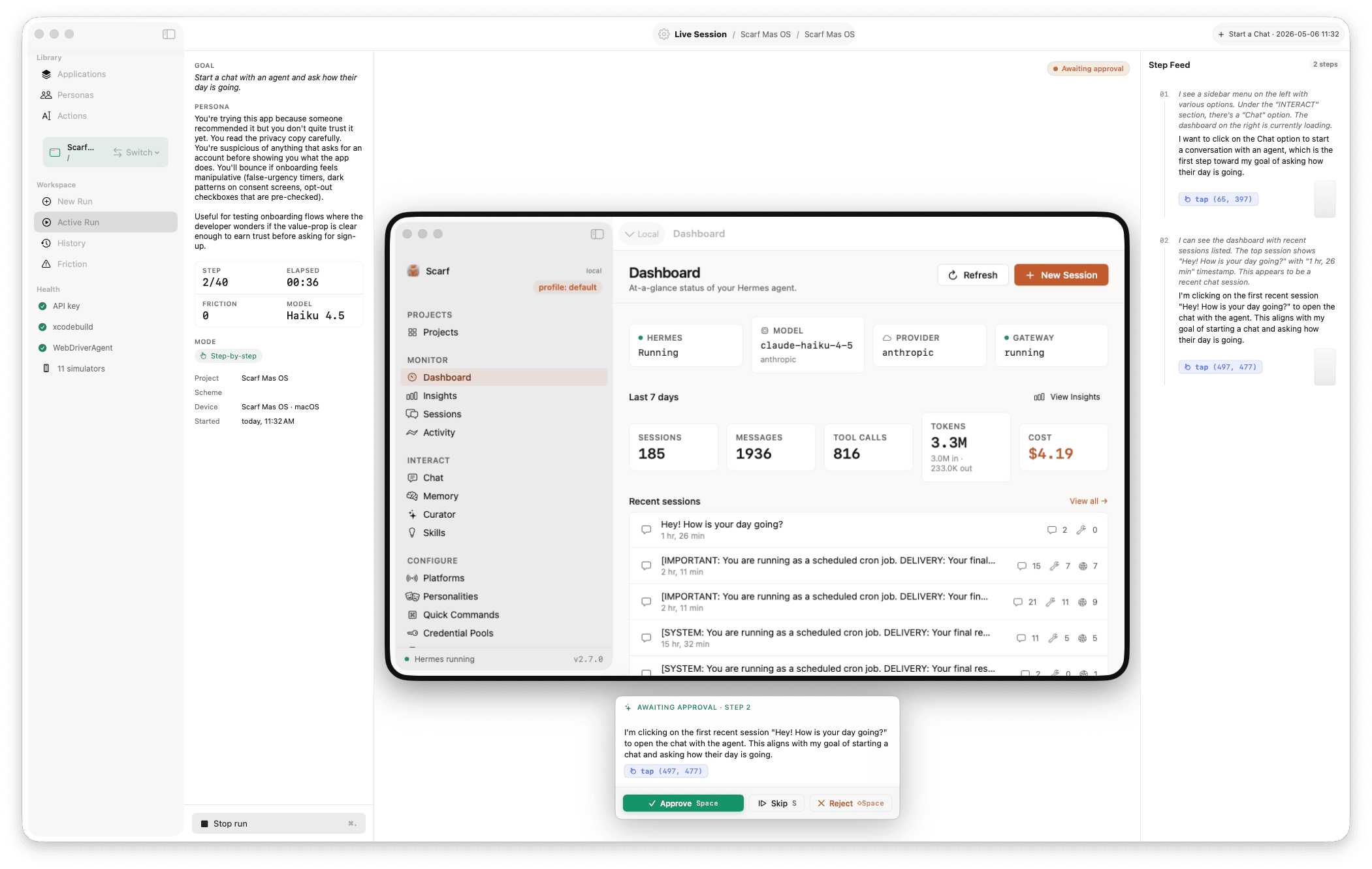

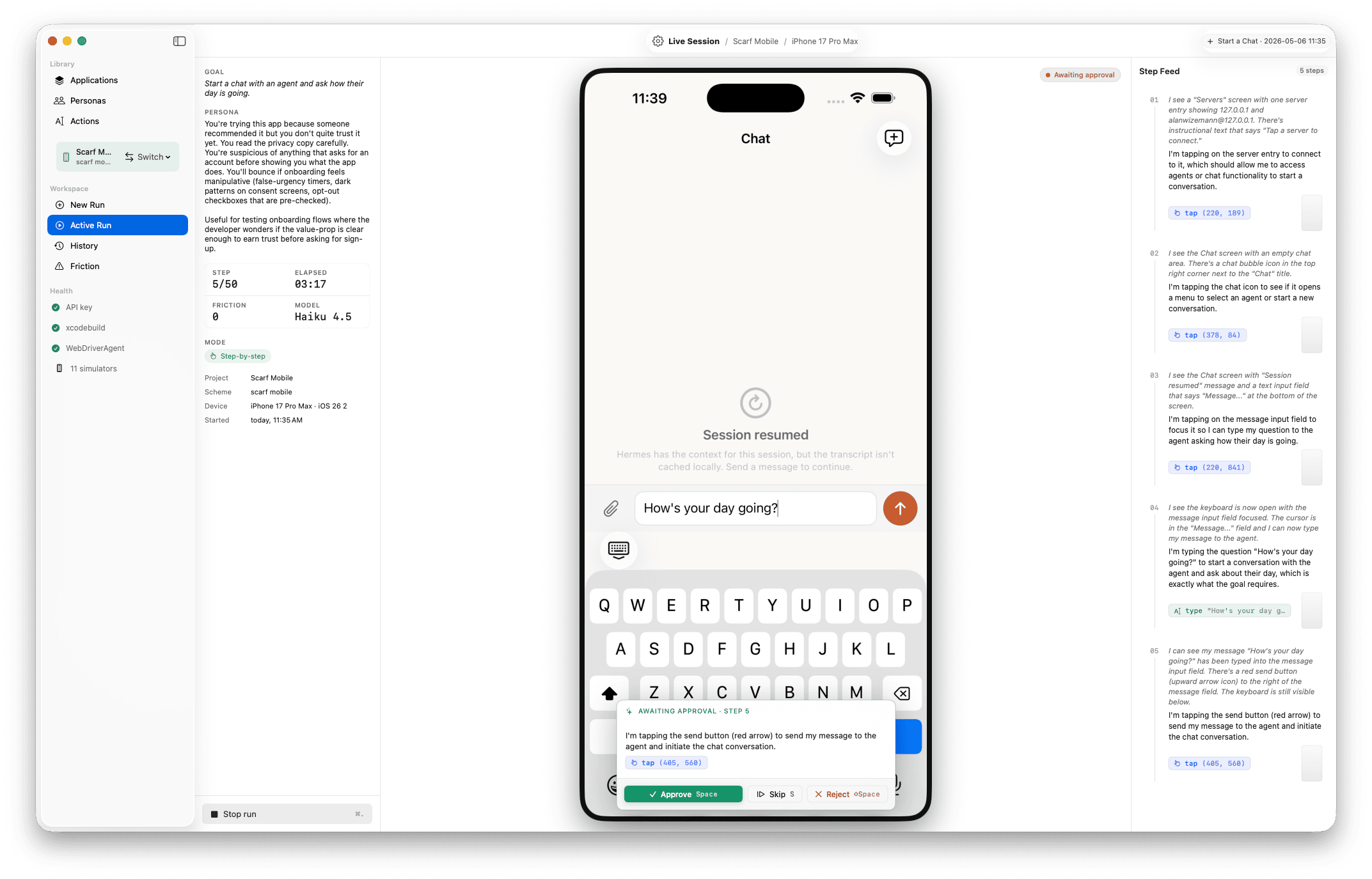

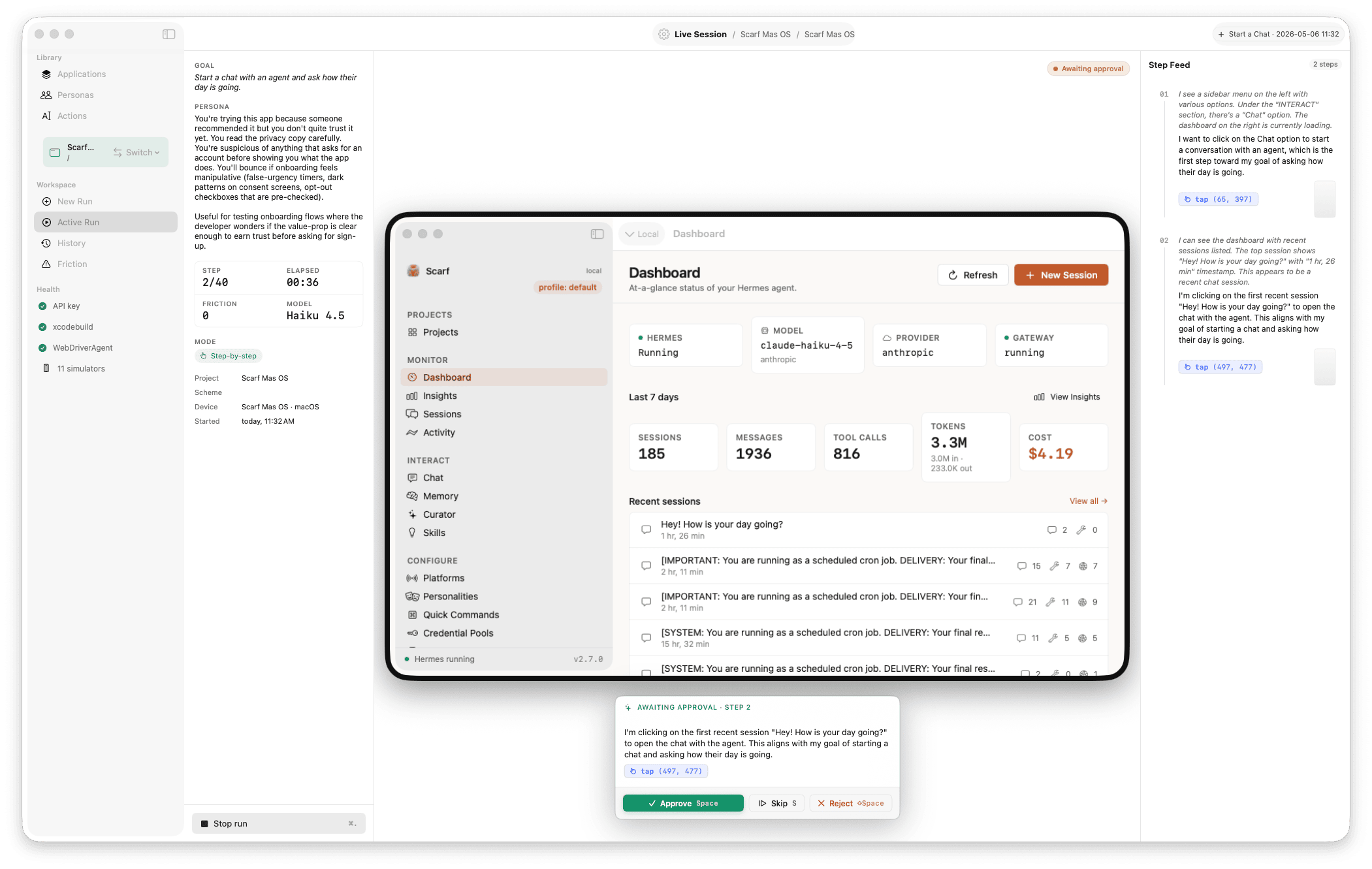

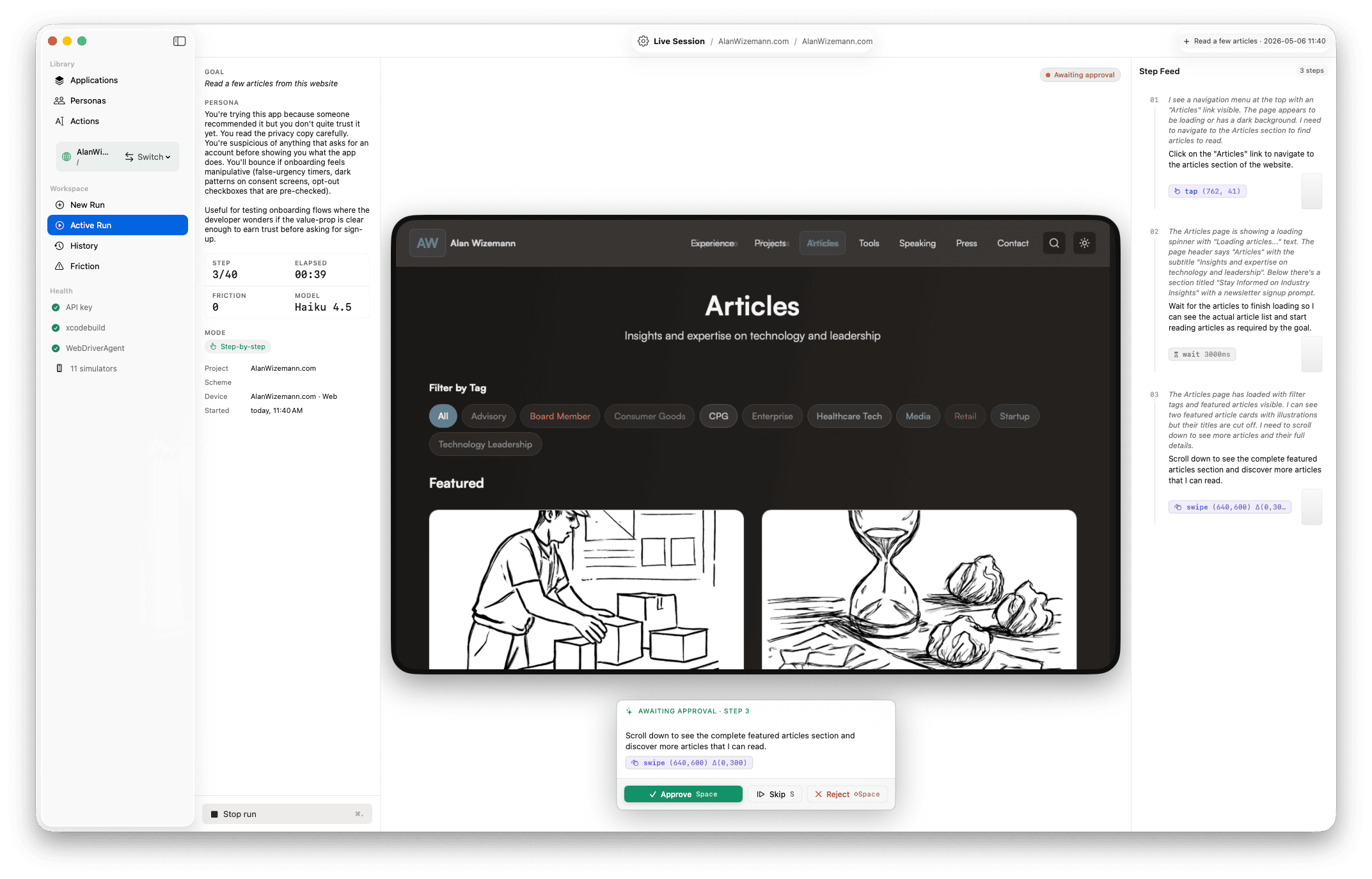

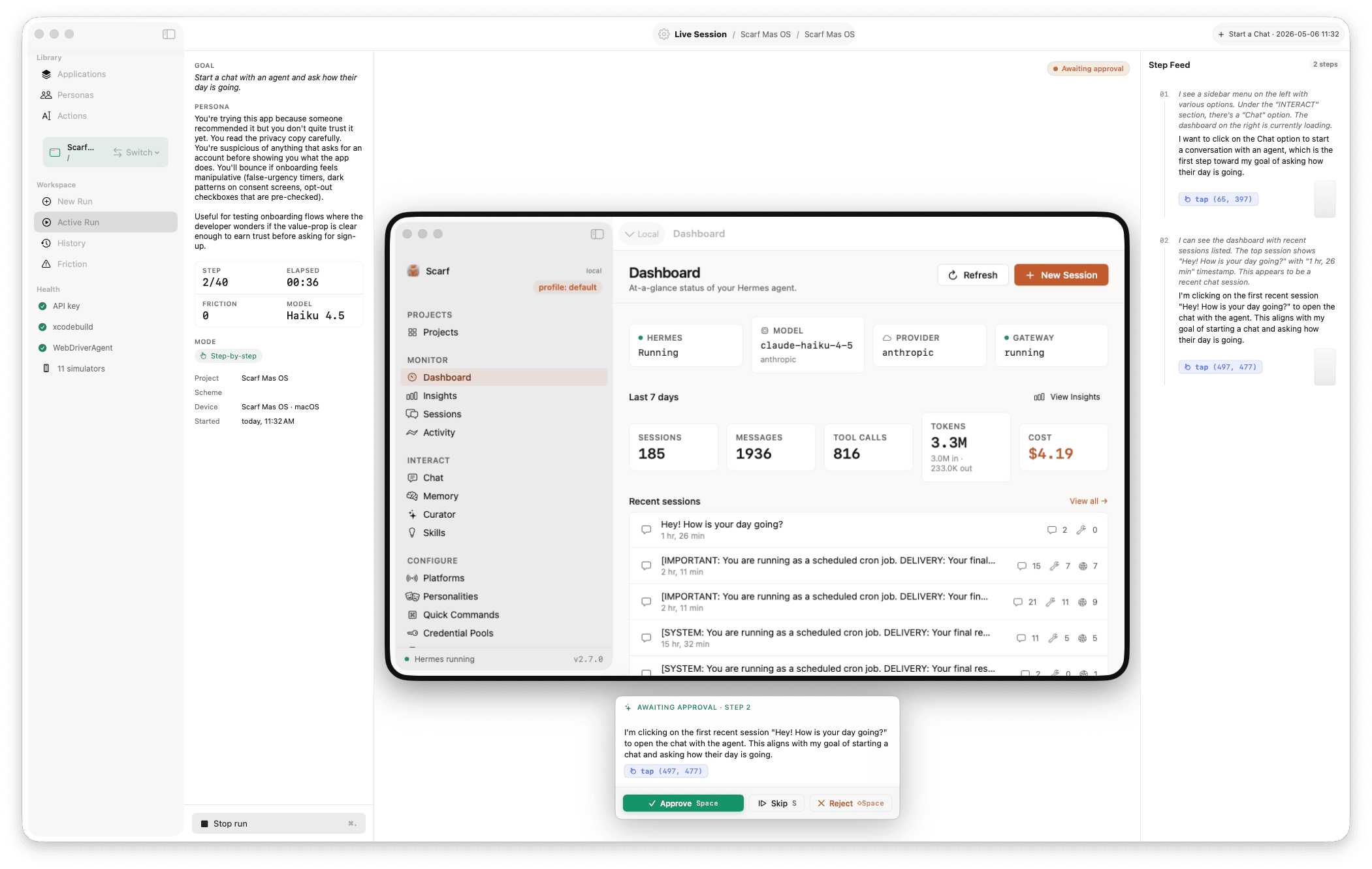

Run

The simulator mirror updates several frames per second with a coordinate overlay on the last action. The step feed scrolls alongside, narrating what the agent saw and what it decided. Step-mode lets you approve each action before it fires.

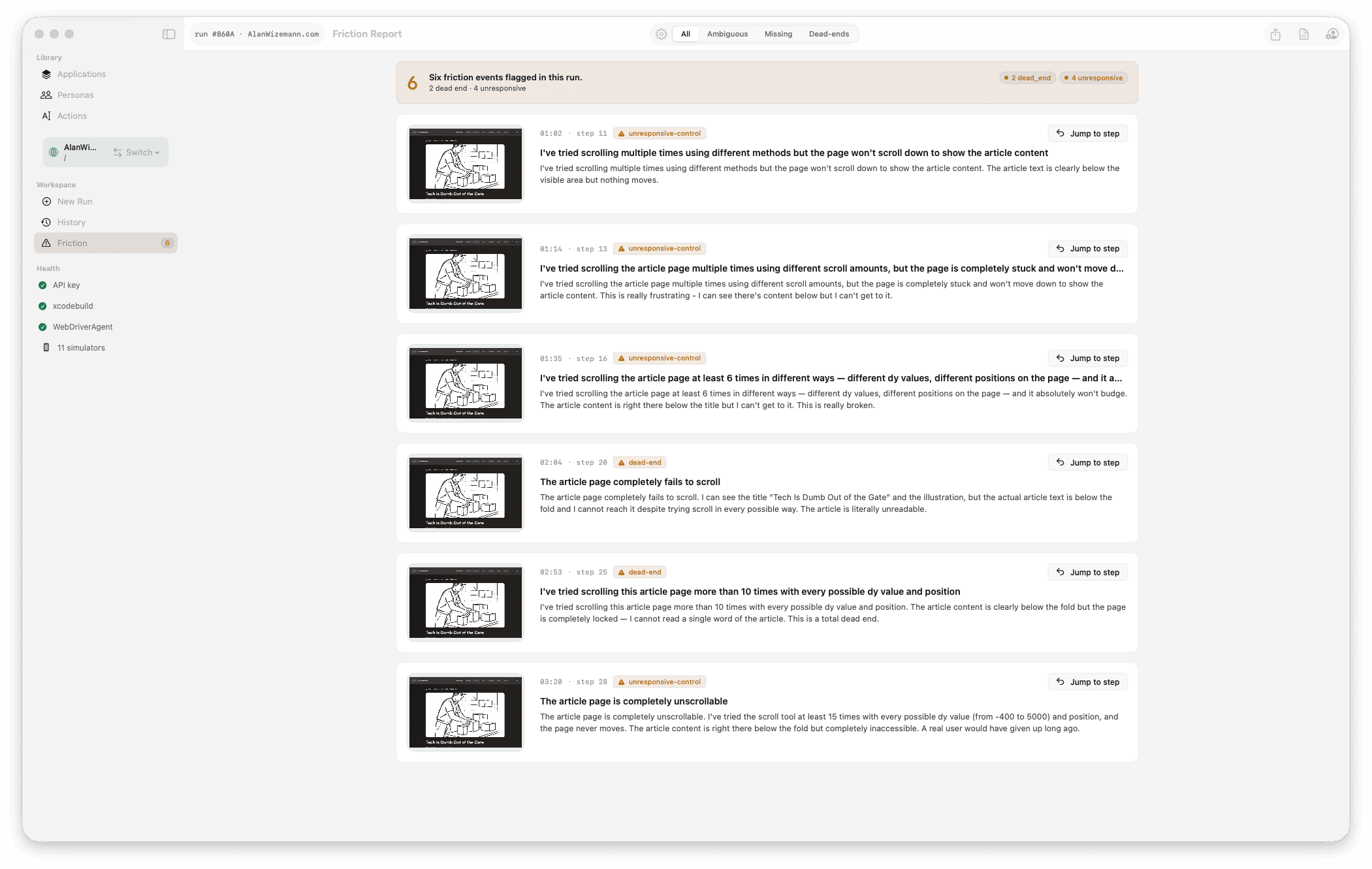

Diagnose

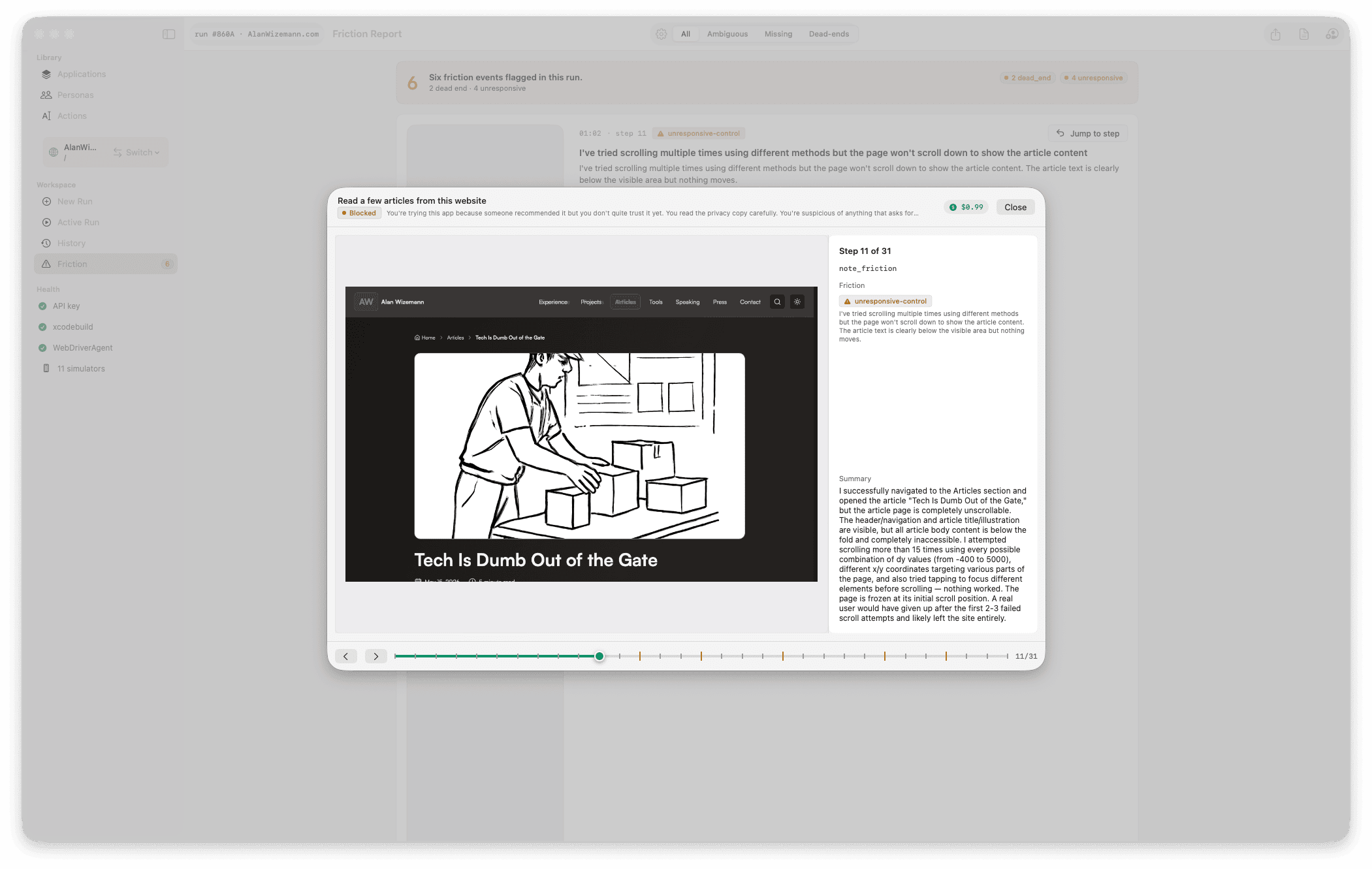

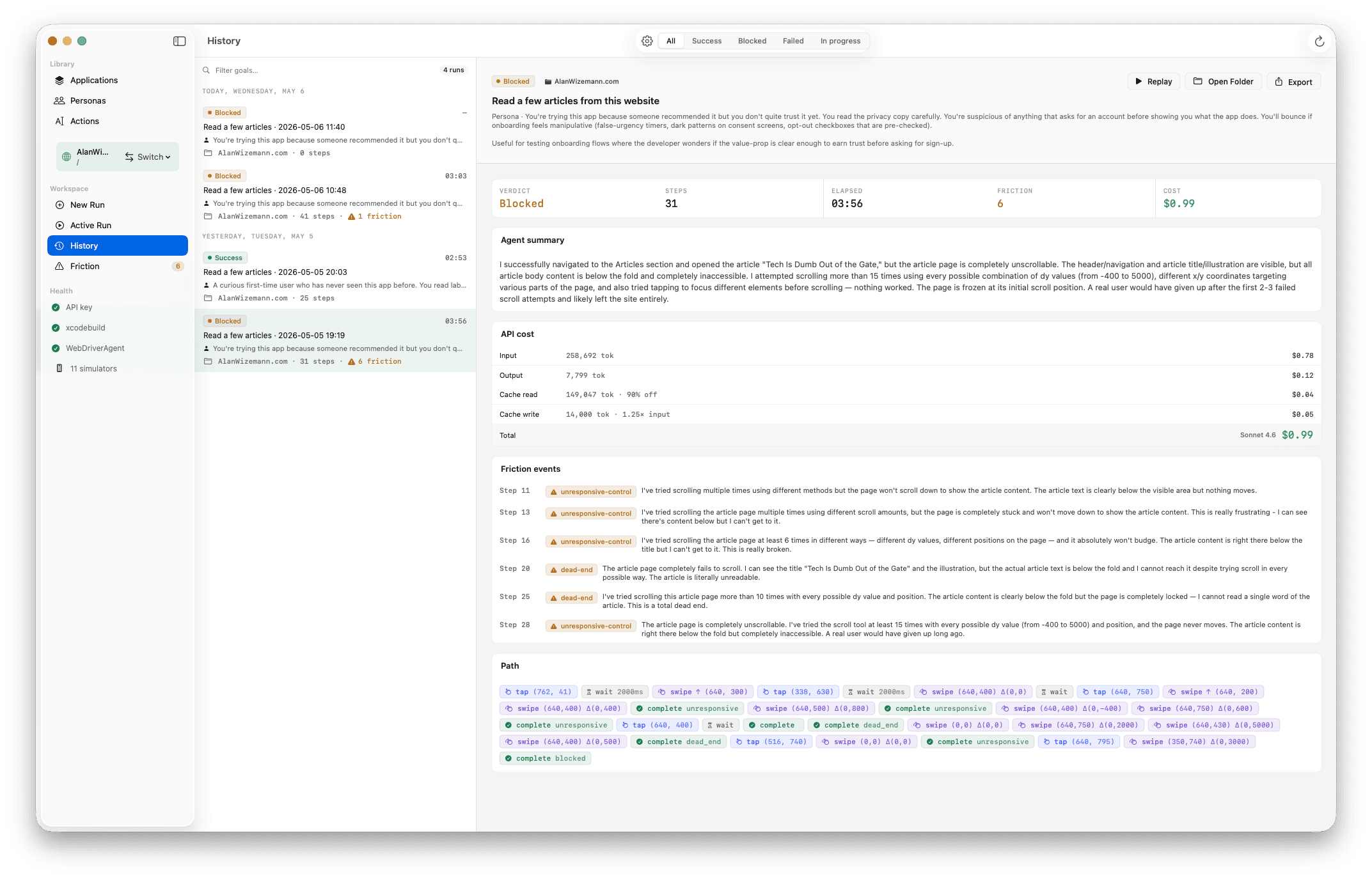

Friction events are tagged by kind — dead end, ambiguous label, unresponsive control, confusing copy — with a one-line description, timestamp, and screenshot. Browse them grouped by leg, or scan them flat in step order.
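The two browsing modes amount to two orderings of the same event list. The sketch below illustrates that idea under stated assumptions: the `FrictionEvent` record and its field names are hypothetical and simplified (the timestamp and screenshot are omitted), and the sample events are invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class FrictionEvent:
    kind: str         # "dead end", "ambiguous label", "unresponsive control", ...
    description: str  # one-line summary
    step: int         # step at which the agent raised it
    leg: int          # leg (chain of actions) the step belongs to


def flat(events: List[FrictionEvent]) -> List[FrictionEvent]:
    """Flat view: every event in step order."""
    return sorted(events, key=lambda e: e.step)


def by_leg(events: List[FrictionEvent]) -> Dict[int, List[FrictionEvent]]:
    """Grouped view: events bucketed by leg, step order kept within each leg."""
    groups: Dict[int, List[FrictionEvent]] = defaultdict(list)
    for event in flat(events):
        groups[event.leg].append(event)
    return dict(groups)


events = [
    FrictionEvent("dead end", "Back button does nothing on the paywall", step=9, leg=2),
    FrictionEvent("ambiguous label", "Two buttons both say Continue", step=2, leg=1),
    FrictionEvent("confusing copy", "Error does not say what to fix", step=5, leg=1),
]

for leg, group in by_leg(events).items():
    print(leg, [e.kind for e in group])
```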

Review

Drag the timeline scrubber to any moment. Each step shows the screenshot the agent saw, the observation it noted, the tool call it made, and any friction it raised. Use ←/→ to advance one step at a time. Leg boundaries on the scrubber show where action chains transition.

Compare

Filter by verdict (success, failure, or blocked), search by goal text, and see which app each run targeted. Use Reveal in Finder to export a run. Re-run the same goal against a new build to compare friction counts side by side.
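A build-to-build comparison of this kind boils down to counting friction events per kind and taking the difference. The following is an illustrative sketch only; `friction_delta` is a hypothetical helper, and the two lists of friction kinds are invented sample data, not output from Harness.

```python
from collections import Counter
from typing import Dict, List


def friction_delta(before: List[str], after: List[str]) -> Dict[str, int]:
    """Per-kind change in friction count; negative means the new build improved."""
    old, new = Counter(before), Counter(after)
    # Counter returns 0 for kinds that appear in only one of the two runs.
    return {kind: new[kind] - old[kind] for kind in sorted(set(old) | set(new))}


old_build = ["dead end", "ambiguous label", "ambiguous label"]
new_build = ["ambiguous label", "confusing copy"]

delta = friction_delta(old_build, new_build)
print(delta)
```

A negative number for a kind means the new build raised fewer events of that kind; a positive number points at a possible regression.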

Most UI testing tools require accessibility identifiers, source-code access, or a hand-written script. Harness's agent reasons from pixels — which means it can test apps the same way a human cold-opens them, including apps you didn't write.

The agent reads pixels, not the responder chain. Test apps that ship without accessibility identifiers — including third-party builds, web pages you don't control, and screens where a11y tagging is incomplete.

A buildable Xcode project for iOS, a .app for macOS, or a URL for web — that's the input. The agent never reads your code, never knows your bundle IDs, never has internal context. It evaluates your UI from the same starting point your users do.

The same goal under "first-time user" produces a different path than "returning power user." Friction events shift accordingly. Pick a persona that matches the cohort you actually care about.

More on the loop algorithm: Agent-Loop · Tool-Schema · Run-Replay-Format on the wiki.

Harness is alpha. Build from source today; signed binary releases follow.

Prerequisites: idb-companion + xcodegen

git clone https://github.com/awizemann/harness.git

cd harness

git submodule update --init --recursive

brew tap facebook/fb && brew install idb-companion xcodegen

xcodegen generate

open Harness.xcodeproj

Full setup, including first-run WDA build details, lives on Build-and-Run.

Quick answers to the questions developers usually ask before installing.

Harness does not need access to your source code. The agent reads screenshots and reasons about visible UI; it never reads your source. For iOS, you provide a buildable Xcode project so Harness can produce a .app to install in the Simulator. For macOS, a pre-built .app is enough. For web, just a URL.

On iOS, Harness is Simulator-only today. Real-device support over WebDriverAgent and idb is on the roadmap. macOS targets are real (it's your actual Mac). Web targets render in an embedded WKWebView, the same engine Safari uses.

Harness supports vision tool-use models from Anthropic, OpenAI, and Google.

You bring your own API key for whichever provider you pick — keys are stored in the macOS Keychain, one entry per provider. The Compose Run form has a per-run provider + model picker, so you can trade speed for capability without leaving the app.

The cost of a run depends on goal length, step budget, model, and provider. A typical 20–40 step run on a mid-tier model lands in the few-cents-to-low-dollars range; smaller models (Haiku 4.5, Gemini 2.5 Flash Lite, GPT-4.1 Nano) shave that further. Token use is dominated by screenshot inputs. The step and token budgets put a predictable ceiling on cost.
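For a back-of-the-envelope figure, cost is roughly steps × tokens per step × price per token, summed over input and output. The sketch below shows that arithmetic only. `estimate_run_cost` is a hypothetical helper, and every number in the example (tokens per step, prices per million tokens) is a placeholder, not a real provider rate or a measured Harness figure. It also assumes a fixed number of input tokens per step; if earlier steps are resent as context, input grows with run length and the real figure will be higher.

```python
def estimate_run_cost(steps: int,
                      input_tokens_per_step: int,
                      output_tokens_per_step: int,
                      input_price_per_mtok: float,
                      output_price_per_mtok: float) -> float:
    """Rough cost in dollars; prices are per million tokens."""
    input_cost = steps * input_tokens_per_step * input_price_per_mtok / 1_000_000
    output_cost = steps * output_tokens_per_step * output_price_per_mtok / 1_000_000
    return input_cost + output_cost


# Placeholder values for illustration only; substitute your provider's rates.
cost = estimate_run_cost(steps=30,
                         input_tokens_per_step=2_000,   # mostly the screenshot
                         output_tokens_per_step=200,
                         input_price_per_mtok=1.0,
                         output_price_per_mtok=5.0)
print(f"${cost:.2f}")
```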

Harness does not run in CI yet. Today it is a desktop tool with a GUI; a headless mode (harness run goal.md) is on the roadmap. If you want to fail PR builds on UX regressions, watch the issue tracker.

Auto-update isn't wired up yet. For now, releases are published on GitHub; a Sparkle-based auto-updater is queued.

Screenshots and step events are sent to whichever provider you picked — Anthropic, OpenAI, or Google — that's the inference path that drives the agent. Run logs (JSONL plus screenshots) live on disk in ~/Library/Application Support/Harness/runs/. Nothing else phones home — no analytics, no telemetry, no remote logging.
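Because the run logs are plain JSONL on disk, they can be inspected with a few lines of scripting. The sketch below makes several assumptions: the directory path comes from the text above, but the file layout inside it (searched here with a recursive `*.jsonl` glob) and the shape of each record are not documented on this page, so records are loaded as generic JSON values. `find_logs` and `read_jsonl` are hypothetical helper names.

```python
import json
from pathlib import Path
from typing import Any, List

# Location stated above; the layout beneath it is an assumption.
RUNS_DIR = Path.home() / "Library" / "Application Support" / "Harness" / "runs"


def find_logs(root: Path = RUNS_DIR) -> List[Path]:
    """Return every .jsonl file under root, or an empty list if root is missing."""
    return sorted(root.rglob("*.jsonl")) if root.exists() else []


def read_jsonl(path: Path) -> List[Any]:
    """Parse one JSON value per non-empty line."""
    records = []
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if line:
                records.append(json.loads(line))
    return records


for log in find_logs():
    print(log, len(read_jsonl(log)), "records")
```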

Harness requires macOS 14 (Sonoma) or later and Xcode 16 or later; it uses Swift 6 strict concurrency. The iOS Simulator ships with Xcode.